Now that we're about to support unit tests in Orxonox, new questions arise. Who should run those tests? When should they be run? What happens if they fail?

In software engineering, continuous integration is considered a "best practice". In short, this means that developers commit frequently to a shared repository (in our case: SVN). After each commit (or sometimes only once per day), a server builds the project and runs automated tests. It provides a dashboard where developers can follow the progress of development and check whether all tests were successful.

(Feel free to read more about this topic on Wikipedia.)

There are a number of software tools for continuous integration, both open source and proprietary. Among the open source tools, I have already heard of:

- Cruise Control

- Hudson

- Jenkins

At work I use Bamboo, a proprietary tool.

They all offer more or less the same features: defining build plans (which repository to check out, how to build it, how to run the tests), a project overview (does it build, were all tests successful) and often some nice graphical charts that show the progress over time. They mostly differ in usability and aesthetic appeal.
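To make the notion of a build plan a bit more concrete, here is a minimal Python sketch of what such a plan boils down to: a checkout step, some build steps and some test steps, run in order until one fails. This is not how Hudson or any of the other tools is implemented; `BuildPlan`, `run_plan` and the status strings are hypothetical names chosen for illustration, and the placeholder commands stand in for the real SVN checkout, build and test commands.

```python
import subprocess
import sys
from dataclasses import dataclass, field


@dataclass
class BuildPlan:
    """Hypothetical description of a build plan: what to check out, build and test."""
    name: str
    checkout: list[str]  # e.g. ["svn", "checkout", "<repository URL>", "src"]
    build: list[list[str]] = field(default_factory=list)  # e.g. configure, compile
    test: list[list[str]] = field(default_factory=list)   # e.g. run the unit test binary


def run_plan(plan: BuildPlan) -> str:
    """Run all steps in order and stop at the first one that fails.

    A non-zero exit code counts as a failure. The returned status strings
    are made up for this sketch.
    """
    for command in [plan.checkout, *plan.build]:
        if subprocess.run(command).returncode != 0:
            return "BUILD FAILED"
    for command in plan.test:
        if subprocess.run(command).returncode != 0:
            return "TESTS FAILED"
    return "SUCCESS"


# Placeholder steps that use the current Python interpreter, so the sketch
# runs anywhere without SVN or a compiler.
PASSING_STEP = [sys.executable, "-c", "pass"]
FAILING_STEP = [sys.executable, "-c", "import sys; sys.exit(1)"]

if __name__ == "__main__":
    plan = BuildPlan("testing", PASSING_STEP, [PASSING_STEP], [FAILING_STEP])
    print(plan.name, run_plan(plan))  # testing TESTS FAILED
```

Distinguishing "build failed" from "tests failed" mirrors what the dashboards show: a project that doesn't even compile is a different kind of problem than a red test.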

Today I gave Hudson a try, mostly because it's easy to set up and I had some evidence that it would work in combination with google-test. Now that I know how to set it up, I'm pretty sure google-test would also work with any other common tool, because it has a command-line option to create an XML report after running the tests. This report is written in a more or less standardized format, so we are still free to try other tools (e.g. Cruise Control). But for the moment, Hudson will do the job.
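For reference, the switch in question is `--gtest_output=xml:<file>` (with just `xml`, google-test writes `test_detail.xml` to the current directory). The resulting JUnit-style file has a `<testsuites>` root with `tests` and `failures` counters and one `<testcase>` element per test, which gets a `<failure>` child if the test failed. The sketch below shows roughly what a CI server extracts from such a file; `summarize_report` is a hypothetical helper, and `SAMPLE_REPORT` is a hand-written, shortened example rather than actual output of our tests.

```python
import xml.etree.ElementTree as ET

# Hand-written, shortened example in the format google-test produces
# (not actual output from Orxonox).
SAMPLE_REPORT = """\
<testsuites tests="3" failures="1" errors="0" time="0.035" name="AllTests">
  <testsuite name="MathTest" tests="2" failures="1" errors="0" time="0.030">
    <testcase name="Addition" status="run" time="0.020" classname="MathTest">
      <failure message="Expected: 2, actual: 3" type=""/>
    </testcase>
    <testcase name="Subtraction" status="run" time="0.010" classname="MathTest"/>
  </testsuite>
  <testsuite name="StringTest" tests="1" failures="0" errors="0" time="0.005">
    <testcase name="Concat" status="run" time="0.005" classname="StringTest"/>
  </testsuite>
</testsuites>
"""


def summarize_report(xml_text: str) -> dict:
    """Extract the counters and the names of failed tests from a report.

    Hypothetical helper; for a file on disk use ET.parse(path).getroot().
    """
    root = ET.fromstring(xml_text)  # the <testsuites> element
    failed = [
        f"{suite.get('name')}.{case.get('name')}"
        for suite in root.iter("testsuite")
        for case in suite.iter("testcase")
        if case.find("failure") is not None  # failed tests carry a <failure> child
    ]
    return {
        "tests": int(root.get("tests", 0)),
        "failures": int(root.get("failures", 0)),
        "failed": failed,
    }


if __name__ == "__main__":
    print(summarize_report(SAMPLE_REPORT))
    # {'tests': 3, 'failures': 1, 'failed': ['MathTest.Addition']}
```

Because the other tools read the same kind of file, switching from Hudson to something else later should mostly be a matter of pointing the new tool at the report.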

I set it up to build the "testing" branch (the only one that contains unit tests so far) and it worked almost instantly; I only had to tweak some of my tests to run on Linux (until then I had only tested them on Windows). It runs on port 8080 on our server, but it's not public yet (you could connect through an SSH tunnel). Below are some screenshots.

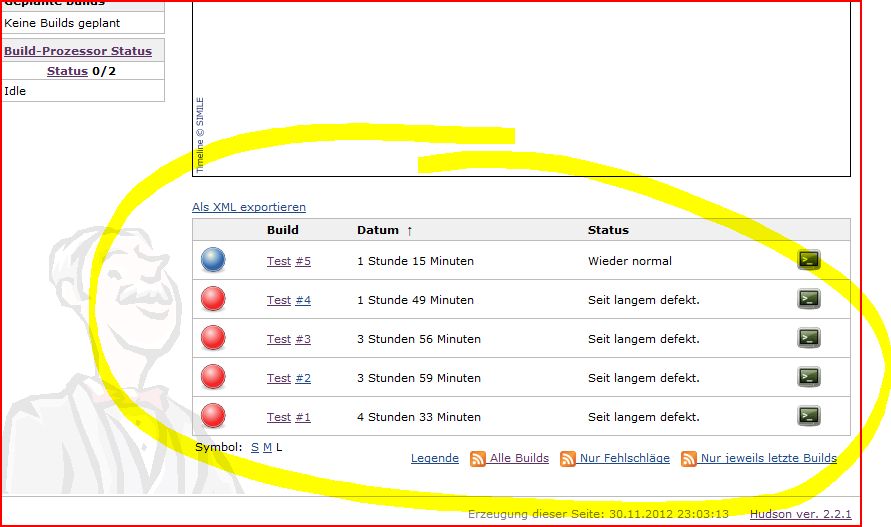

The build overview: you can see 4 failed builds (red) and a 5th that succeeded (blue). I assume it's optimized for red-green colorblind people, hence the blue color for success.


The details of the 5th build: you can see my commit 9479 that made it work, plus the information that all tests were successful.


A simple graphical chart that shows the execution time (or the number) of the tests. Tests were only executed in builds 4 and 5, hence only two samples are visible. Obviously this doesn't make much sense yet (with execution times < 0.1 s), but it could be handy later.
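The data behind such a chart is simple: one point per build that produced a test report. Here is a minimal sketch, assuming the reports are the google-test XML files mentioned earlier and that the `time` attribute of `<testsuites>` holds the total duration in seconds; `trend` and the report dictionary are hypothetical and not part of Hudson.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def trend(reports: dict[int, Optional[str]]) -> list[tuple[int, int, float]]:
    """Return (build number, number of tests, duration in seconds) per build.

    `reports` maps build numbers to the XML text of that build's google-test
    report, or None if the build produced no report. Such builds are skipped,
    so they simply leave no point in the chart.
    """
    points = []
    for build in sorted(reports):
        xml_text = reports[build]
        if xml_text is None:
            continue
        root = ET.fromstring(xml_text)  # the <testsuites> element
        tests = int(root.get("tests", 0))
        seconds = float(root.get("time", 0.0))  # assumed to be in seconds
        points.append((build, tests, seconds))
    return points


if __name__ == "__main__":
    # Made-up reports: builds 1-3 produced none, builds 4 and 5 did.
    report_4 = '<testsuites tests="4" failures="1" time="0.011" name="AllTests"/>'
    report_5 = '<testsuites tests="5" failures="0" time="0.012" name="AllTests"/>'
    print(trend({1: None, 2: None, 3: None, 4: report_4, 5: report_5}))
    # [(4, 4, 0.011), (5, 5, 0.012)]
```

Skipping builds without a report, instead of plotting them as zero, matches what we see in the screenshot: the chart starts at the first build that actually ran tests.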


That's it so far. It's not much, but it's a beginning.

We can do fancier things with continuous integration: measuring unit test code coverage (how much of our code is tested), computing software quality metrics (do we adhere to common standards), or building packages (e.g. nightly builds).

By the way, I just realized that the screenshots are in German. Sorry about that, but I don't think it really matters at the moment.